Protecting digital data and tackling deepfakes early

Key findings:

- The chip system cryptographically signs data at the moment it is generated within the sensor.

- This creates a verifiable link between the recorded data and the physical device that captured it.

- The approach enables detection of tampering and helps distinguish real recordings from artificial ones.

- In principle, the technology can be integrated into a wide range of sensors, including cameras, smartphones, and scientific devices.

Artificial intelligence is making it increasingly easy to create convincing fake data, such as images, videos, and audio. As a result, trust in digital content, from social media posts to scientific data, is under growing pressure.

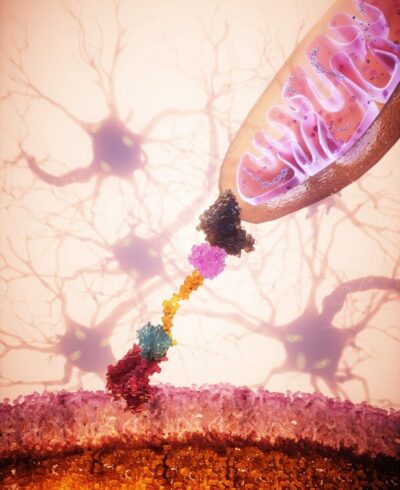

Researchers from the Institute of Molecular and Clinical Ophthalmology Basel (IOB), Andreas Hierlemann’s lab at ETH Zurich, and collaborators have developed a sensor technology that addresses the problem at its root. Their system ensures that data is secured at the exact moment it is created, making undetectable manipulation extremely difficult.

Securing data at the moment of capture

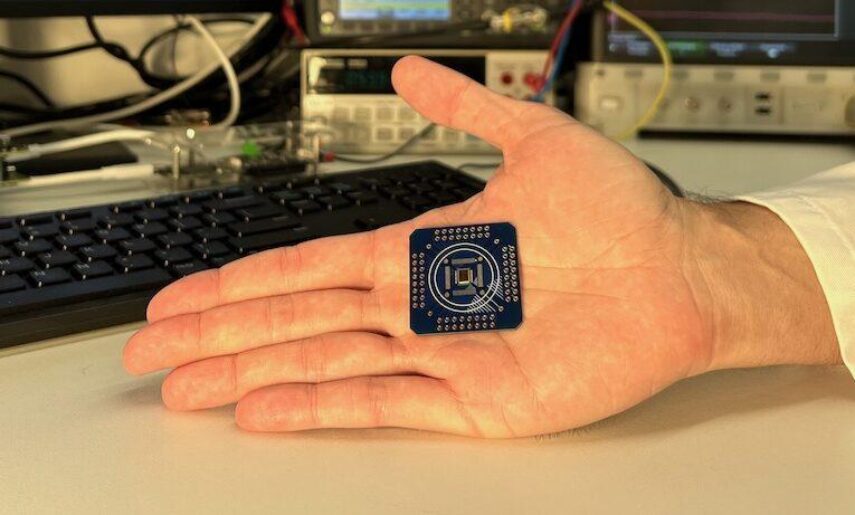

At the core of the innovation is a new type of sensor chip. Unlike conventional systems, which protect data only after it has been recorded, this chip embeds security directly into the sensing process.

As data is captured, the chip immediately computes a compact digital fingerprint of it, known as a “hash”, and cryptographically signs that hash with a secret key stored within the hardware. The resulting signature proves where the data came from and whether it has been altered since.

Because this happens entirely inside the chip, there is no opportunity to tamper with the data before it is secured.

A built-in guarantee of authenticity

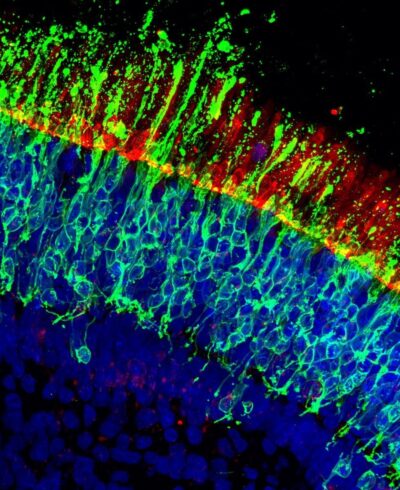

The researchers demonstrated the concept using a prototype sensor that records electrical signals from heart cells. Each data point can be traced back to the exact device that produced it.

This means authenticity no longer depends on trusting software, platforms, or users. Instead, it is anchored in the physical device itself.

“We wanted to show that the rise of deepfakes is not something society simply has to accept. With the right hardware-based approach, authenticity can be built in at the point where data is captured,” says Felix Franke, Head of the Quantitative Visual Physiology Group and senior co-author.

A potential game changer

The implications are far-reaching. Cameras could automatically certify genuine images. Journalists and courts could verify evidence independently. Scientific data could remain reliable, even as AI-generated content becomes more widespread.

While the current system is a prototype, the researchers believe it can be scaled up and integrated into everyday devices.

Scientific contact:

Felix Franke, Head of the Quantitative Visual Physiology Group

E-Mail: felix.franke@iob.ch

Fernando Cardes García, ETH Zurich, Professorship for Biosystems Engineering

E-Mail: fernando.cardes@bsse.ethz.ch

Illustration: © Caroline Arndt Foppa / ETH Zürich

The work was financially supported by the Swiss National Science Foundation (SNSF) and the State Secretariat for Education, Research and Innovation (SBFI) through the SwissChips initiative.

Original Publication

In-sensor cryptographic signature generation for linking a physical process and an immutable digital entity

Fernando Cardes, Sebastian Bürgel, Xinyue Yuan, Qianchen Yu, Arianna Rubino, Jihyun Lee, Raziyeh Bounik, Vijay Viswam, Andreas Hierlemann and Felix Franke

Nature Electronics (2026)

DOI: https://doi.org/10.1038/s41928-026-01593-5